I’ve been thinking about reviving the blog, and as a way of easing back in I’ve come up with some short post ideas. As usual, these are a bit half-baked, so YMMV.

A common way of generating a “hook” in a technical talk is to say “actually, this is really an old idea.” Two examples of this come to mind for me: group testing and randomized response. For both of these topics, there is a “classic paper” with an “interesting historical anecdote” that generates a kind of factoid in the audience’s mind. Unfortunately, the factoid that gets stored is often incorrect.

Group testing refers to the process of finding a (small) number of “defective elements” in a larger set of elements by testing groups of elements. The assumption is that the test you have is sensitive enough to flag a group as containing a defective element. This was first proposed in Robert Dorfman’s 1943 paper The Detection of Defective Members of Large Populations in The Annals of Mathematical Statistics. He introduced group testing with the application of screening for syphilis in the United States Public Health Service and the Selective Service System during WWII. A syphilis test (the Wassermann test) that is sufficiently sensitive could be used on pooled blood samples: if the pooled test is negative, the whole group is clear, and if it is positive, you could test the individuals in the group or further subdivide it.
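To make the two-stage idea concrete, here is a minimal sketch of the "test the pool, then test individuals if the pool is positive" scheme. The function name `dorfman_screen`, the fixed group size, and the 2% prevalence in the usage example are all my own illustrative assumptions, and the sketch assumes an idealized pooled test that never makes errors.

```python
import random


def dorfman_screen(is_defective, group_size):
    """Two-stage pooled screening (hypothetical helper for illustration).

    Assumption: the pooled test is perfectly accurate, i.e. a pool tests
    positive exactly when it contains at least one defective element.
    Returns the indices flagged as defective and the number of tests used.
    """
    tests_used = 0
    flagged = []
    n = len(is_defective)
    for start in range(0, n, group_size):
        group = range(start, min(start + group_size, n))
        tests_used += 1  # one pooled test for the whole group
        if any(is_defective[i] for i in group):
            # Pool is positive: fall back to testing each member individually.
            for i in group:
                tests_used += 1
                if is_defective[i]:
                    flagged.append(i)
    return flagged, tests_used


if __name__ == "__main__":
    rng = random.Random(0)
    # Illustrative population: 1000 people, each defective with probability 0.02.
    population = [rng.random() < 0.02 for _ in range(1000)]
    flagged, tests_used = dorfman_screen(population, group_size=8)
    print(f"flagged {len(flagged)} individuals using {tests_used} tests")
```

When defectives are rare, most pools come back negative and the total number of tests is well below one per person, which is the whole appeal of the scheme. The savings depend entirely on the pooled test staying accurate as the pool grows.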

As noted in this paper by Gilbert and Strauss, the “Selective Service System did not put group testing for syphilis into practice because the Wassermann tests were not sensitive enough…” when pooled. That paper doesn’t give a citation for this claim, but you can find the source in the book by Du and Hwang:

Unfortunately, this very promising idea of grouping blood samples for syphilis screening was not actually put to use. The main reason, communicated to us by C. Eisenhart, was that the test was no longer accurate when as few as eight or nine samples were pooled. Nevertheless, test accuracy could have been improved over years, or possibly not a serious problem in screening for another disease. Therefore we quoted the above from Dorfman in length not only because of its historical significance, but also because at this age of a potential AIDS epidemic, Dorfman’s clear account of applying group testing to screen syphilitic individuals may have new impact to the medical world and the health service sector.

It seems that group testing was not used for the syphilis screenings. Most people are careful to say that it was proposed rather than used, but unless the speaker “closes the loop,” people learning about it for the first time could be misled. Group testing has been used for many other diseases, most recently in some approaches for COVID screening.

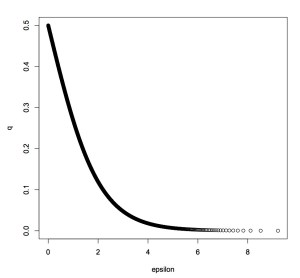

The second example is randomized response, which is a technique for providing plausible deniability to survey respondents. A surveyor asks an interviewee a sensitive binary question. The interviewee, whose true answer is X∈{0,1}, samples a Bernoulli(p) random variable Z∈{0,1} (“flips a biased coin”) and responds with Y=X⊕Z, where ⊕ is addition modulo 2. Randomized response was proposed by Stanley L. Warner in his 1965 JASA paper Randomized response: A survey technique for eliminating evasive answer bias. Talks on differential privacy (especially local differential privacy) often trot out Warner’s paper as an example of how differential privacy has appeared “classically.” I have done this myself.
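Here is a rough sketch of the mechanism Y=X⊕Z as described above, together with how a surveyor could recover the population rate from the noisy answers. The estimator is the usual moment-based inversion: if a fraction π of respondents have X=1, then P(Y=1) = p + π(1−2p), so π can be estimated as (mean(Y) − p)/(1−2p) whenever p ≠ 1/2. The function names and the numbers in the usage example are my own assumptions for illustration.

```python
import random


def randomize(x, p, rng):
    """Return Y = X xor Z, where Z ~ Bernoulli(p) is the respondent's coin flip."""
    z = 1 if rng.random() < p else 0
    return x ^ z


def estimate_prevalence(responses, p):
    """Estimate the fraction of respondents with X = 1 from the noisy answers.

    Inverts P(Y = 1) = p + pi * (1 - 2p). The raw estimate can fall outside
    [0, 1] for small samples; this sketch does not clip it.
    """
    if p == 0.5:
        raise ValueError("p = 1/2 makes the responses independent of the truth")
    mean_y = sum(responses) / len(responses)
    return (mean_y - p) / (1 - 2 * p)


if __name__ == "__main__":
    rng = random.Random(0)
    p = 0.25  # illustrative flip probability (an assumption)
    # Illustrative population: each true answer is 1 with probability 0.3.
    truth = [1 if rng.random() < 0.3 else 0 for _ in range(10_000)]
    responses = [randomize(x, p, rng) for x in truth]
    print(f"true rate:      {sum(truth) / len(truth):.3f}")
    print(f"estimated rate: {estimate_prevalence(responses, p):.3f}")
```

No individual response reveals X with certainty, but the aggregate is still informative. Values of p closer to 1/2 give each respondent more deniability at the cost of a noisier estimate.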

Unfortunately, as a 2015 JASA paper of Blair, Imai, and Zhou notes:

Despite the wide applicability of the randomized response technique and the methodological advances, we find surprisingly few applications. Indeed, our extensive search yields only a handful of published studies that use the randomized response method to answer substantive questions…

The earliest study they could find was by Madigan et al. from 1976, who looked at Misamis Oriental province in northern Mindanao (Philippines) and the prevalence of hiding deaths from official counts. So it seems randomized response was not implemented for around a decade after being proposed.

These examples show how easy it is to create misunderstandings by using anecdotes about prior work in talks. I am certainly guilty of both misunderstanding the actual facts and perhaps misrepresenting what actually happened after these cool ideas were first proposed. We all know that the gap between theory and practice can be large, but somehow these fun stories make us a bit less careful.