There’s this nice calculation in a paper of Shannon’s on optimal codes for Gaussian channels which essentially provides a “back of the envelope” way to understand how noise that is correlated with the signal can affect the capacity. I used this as a geometric intuition in my information theory class this semester, but when I described it to other folks I know in the field, they said they hadn’t really thought of capacity in that way. Perhaps it’s all of the AVC-ing I did in grad school.

Suppose I want to communicate over an AWGN channel

where and

satisfies a power constraint

. The lazy calculation goes like this. For any particular message

, the codeword

is going to be i.i.d.

, so with high probability it has length

. The noise is independent and

so

with high probability, so

is more-or-less orthogonal to

with high probability and it has length

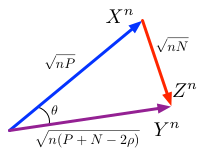

with high probability. So we have the following right triangle:

Looking at the figure, we can calculate using basic trigonometry:

,

so

,

which is the AWGN channel capacity.
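The near-orthogonality claim is easy to check numerically. Here is a minimal sketch, assuming a signal power `P`, a noise variance `N`, and a block length `n` (names and values are my own illustrative choices, not anything from Shannon's paper). It draws one Gaussian codeword and one independent noise vector, measures the angle between the codeword and the received vector, and compares the log of one over the sine of that angle with the usual closed-form AWGN capacity.

```python
import math
import random


def empirical_rate(P, N, n, seed=0):
    """Estimate log2(1/sin(theta)) from one random codeword/noise pair.

    theta is the angle between the codeword x and the received vector y = x + z.
    P, N, n, and seed are illustrative parameters (assumptions of this sketch).
    """
    rng = random.Random(seed)
    x = [rng.gauss(0.0, math.sqrt(P)) for _ in range(n)]  # codeword, i.i.d. N(0, P)
    z = [rng.gauss(0.0, math.sqrt(N)) for _ in range(n)]  # noise, i.i.d. N(0, N)
    y = [xi + zi for xi, zi in zip(x, z)]                 # received vector

    dot_xy = sum(xi * yi for xi, yi in zip(x, y))
    norm_x = math.sqrt(sum(xi * xi for xi in x))
    norm_y = math.sqrt(sum(yi * yi for yi in y))

    cos_theta = dot_xy / (norm_x * norm_y)
    sin_theta = math.sqrt(1.0 - cos_theta ** 2)
    return math.log2(1.0 / sin_theta)


def awgn_capacity(P, N):
    """Closed-form AWGN capacity in bits per channel use."""
    return 0.5 * math.log2(1.0 + P / N)
```

Calling `empirical_rate(4.0, 1.0, 50_000)` and `awgn_capacity(4.0, 1.0)` should give two numbers that agree more closely as `n` grows, since the fluctuations in the lengths and in the inner product shrink with the block length.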

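We can do the same thing for rate-distortion (I learned this from Mukul Agarwal and Anant Sahai when they were working on their paper with Sanjoy Mitter). There we have a Gaussian source with variance $\sigma^2$, distortion $D$, and a quantization vector $\hat{X}^n$. But now we have a different right triangle:

Here the distortion is the “noise” but it’s dependent on the source $X^n$. The “test channel” view says that the quantization $\hat{X}^n$ is corrupted by independent (approximately orthogonal) noise to form the original source $X^n$. So the source $X^n$, of length $\sqrt{n\sigma^2}$, is now the hypotenuse, and the error $X^n - \hat{X}^n$, of length $\sqrt{nD}$, is the side opposite the angle $\theta$ between $X^n$ and $\hat{X}^n$. Again, basic trigonometry shows us

$$\log\frac{1}{\sin\theta} = \frac{1}{2}\log\frac{\sigma^2}{D}.$$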
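To make the side lengths of the rate-distortion triangle concrete, here is a small sketch that reads the rate off the triangle directly. It assumes a source variance `sigma2` and a distortion `D` (hypothetical parameter names introduced for this example), with the source vector as the hypotenuse and the reconstruction error as the side opposite the angle.

```python
import math


def rate_from_right_triangle(opposite, hypotenuse):
    """Return log2(1/sin(theta)), where sin(theta) = opposite / hypotenuse."""
    return math.log2(hypotenuse / opposite)


def gaussian_rate_distortion(sigma2, D, n=1):
    """Rate in bits per sample read off the rate-distortion triangle.

    Hypotenuse: source vector, length sqrt(n * sigma2).
    Side opposite theta: reconstruction error, length sqrt(n * D).
    The remaining leg (the quantization vector) has length sqrt(n * (sigma2 - D)).
    """
    if not 0 < D <= sigma2:
        raise ValueError("need 0 < D <= sigma2")
    source_len = math.sqrt(n * sigma2)
    error_len = math.sqrt(n * D)
    return rate_from_right_triangle(error_len, source_len)
```

The block length `n` cancels in the ratio of lengths, which is why the answer is a per-sample rate: `gaussian_rate_distortion(9.0, 2.0)` and `gaussian_rate_distortion(9.0, 2.0, n=1000)` agree with `0.5 * math.log2(9.0 / 2.0)` up to floating-point error.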

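Turning back to channel coding, what if we have some intermediate picture, where the noise is slightly negatively correlated with the signal, so $\mathbb{E}[XZ] = -\rho$ with $\rho > 0$? With signal power $P$ and noise variance $N$, the cosine of the angle between $X^n$ and $Z^n$ in the picture (the interior angle of the triangle) is

$$\frac{\rho}{\sqrt{PN}}$$

and we have a general triangle like this:

Here we’ve calculated the length of $Y^n$ using the law of cosines:

$$\|Y^n\|^2 = nP + nN - 2\sqrt{nP}\sqrt{nN}\cdot\frac{\rho}{\sqrt{PN}} = n(P + N - 2\rho).$$

So now we just need to calculate $\sin\theta$ again, where $\theta$ is the angle between $X^n$ and $Y^n$. The cosine is easy to find:

$$\cos\theta = \frac{nP + n(P + N - 2\rho) - nN}{2\sqrt{nP}\sqrt{n(P + N - 2\rho)}} = \frac{P - \rho}{\sqrt{P(P + N - 2\rho)}}.$$

Then solving for the sine:

$$\sin^2\theta = 1 - \frac{(P - \rho)^2}{P(P + N - 2\rho)} = \frac{PN - \rho^2}{P(P + N - 2\rho)},$$

and applying our formula, for $\rho < \sqrt{PN}$,

$$\log\frac{1}{\sin\theta} = \frac{1}{2}\log\frac{P(P + N - 2\rho)}{PN - \rho^2}.$$

If we plug in $\rho = 0$ we get back the AWGN capacity and if we plug in $\rho = N$ we get the rate distortion function (for a source of variance $P$ at distortion $N$), but this formula gives the capacity for a range of correlated noise channels.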
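The two limiting cases can be checked mechanically. Below is a minimal sketch, assuming signal power `P`, noise variance `N`, and a correlation parameter `rho` with the sign convention that the per-symbol signal-noise correlation is `-rho` (these names and the convention are assumptions made for the example). It follows the same steps as the triangle argument: law of cosines for the received vector, then the cosine of the angle between signal and received vector, then the sine.

```python
import math


def correlated_noise_rate(P, N, rho):
    """log2(1/sin(theta)) for the general triangle, in bits per channel use.

    Assumes signal power P, noise variance N, and signal-noise correlation -rho.
    """
    if rho * rho >= P * N:
        raise ValueError("need rho^2 < P * N for a non-degenerate triangle")
    y_sq = P + N - 2.0 * rho                     # per-symbol squared length of Y (law of cosines)
    cos_theta = (P - rho) / math.sqrt(P * y_sq)  # angle between X and Y
    sin_sq = 1.0 - cos_theta ** 2
    return 0.5 * math.log2(1.0 / sin_sq)


def closed_form_rate(P, N, rho):
    """Same quantity after simplifying sin^2(theta) algebraically."""
    return 0.5 * math.log2(P * (P + N - 2.0 * rho) / (P * N - rho * rho))
```

With `P = 4.0` and `N = 1.0`, setting `rho = 0.0` reproduces `0.5 * math.log2(1 + P / N)`, and setting `rho = N` reproduces `0.5 * math.log2(P / N)`, matching the two right-triangle pictures.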

I like this geometric interpretation because it's easy to work with and I get a lot of intuition out of it, but your mileage may vary.