I went to all the plenaries this year except Vince Poor’s (because I was still sick and trying to get over it). I was a bit surprised this year that the first three speakers were all from within the IT community — I had been used to the plenaries giving an outsider’s perspective on problems related to information theory. The one speaker from outside, Emery Brown, was asked by Andrea Goldsmith “what can information theorists do to help your field?” I learned a lot from the talks this year though, and the familiarity of the material made it a more gentle introduction to the day for my sleepy brain.

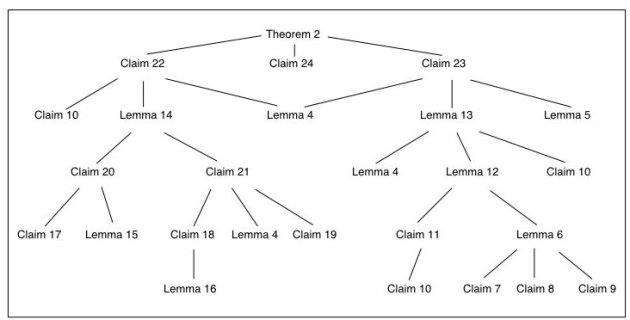

Michelle Effros talked about Network Source Coding, asking three questions: what is a network source code, why does the network matter, and what if separation fails? She emphasized that even when separation fails, bits and data rate are still useful abstractions for doing negotiations with higher layers of the network stack. For me, this brought up an interesting question: are there other currencies that may also make sense, for example in wireless applications? Her major proposal for moving forward was to “question the questions.” Instead of asking “what is the capacity region” we can ask “is the given rate tuple supportable?” She also emphasized the importance of creating a hierarchy of problem reductions (as in complexity theory). To me, that is already happening, so it didn’t seem like news, but maybe the idea was to formalize it a bit more (cf. The Complexity Zoo). The other proposal, also related to CS theory, was to come up with epsilon-approximation arguments. The reason this might be useful is that it is hard in general to implement “optimal” code constructions, and she gave an example from her own work of finding a (1 + epsilon)-approximation for vector quantization.

Shlomo Shamai talked about the Gaussian broadcast channel, discussing first the basics, degradation, and superposition, and then how fading makes the whole problem much more difficult. He apologized in advance for supposedly providing an idiosyncratic look at the problem, but I thought it was an excellent survey. Although he pointed out a number of important open problems in broadcasting (how can we show Gaussian inputs are optimal?), I was hoping he would make more of a call to arms or something. Of course, on Tuesday I was heavily feverish and miserable, so I most likely missed a major point in his talk.
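To make the superposition idea concrete for myself, here is a minimal sketch, not anything from the talk. It assumes the textbook two-user, real-valued, non-fading Gaussian broadcast channel, where the "strong" receiver has the smaller noise variance and the transmitter splits its power between the two messages. The function names and parameters (`superposition_rates`, `is_supportable`, `alpha`) are my own placeholders.

```python
import math


def superposition_rates(power, noise_strong, noise_weak, alpha):
    """Boundary rate pair (bits per channel use) for superposition coding.

    Assumes a degraded scalar Gaussian broadcast channel with
    noise_strong <= noise_weak. alpha is the fraction of the total power
    spent on the strong receiver's message.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    # The strong receiver decodes and subtracts the weak user's codeword,
    # so it sees only its own signal plus noise.
    r_strong = 0.5 * math.log2(1 + alpha * power / noise_strong)
    # The weak receiver treats the strong user's signal as extra noise.
    r_weak = 0.5 * math.log2(
        1 + (1 - alpha) * power / (alpha * power + noise_weak)
    )
    return r_strong, r_weak


def is_supportable(r_strong, r_weak, power, noise_strong, noise_weak):
    """Check whether a rate pair lies inside the superposition region."""
    if r_strong < 0 or r_weak < 0:
        return False
    # Smallest power fraction that still gives the strong user its rate.
    alpha_needed = (2 ** (2 * r_strong) - 1) * noise_strong / power
    if alpha_needed > 1.0:
        return False
    # The weak user's rate decreases in alpha, so the smallest feasible
    # alpha leaves the weak user the largest possible rate.
    _, best_weak = superposition_rates(
        power, noise_strong, noise_weak, alpha_needed
    )
    return r_weak <= best_weak
```

Sweeping `alpha` from 0 to 1 traces out the boundary of the region, and `is_supportable` answers a yes/no question about a single rate pair. Fading is exactly what this sketch leaves out, which is where the talk said things get hard.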

As I said, I missed Vince Poor’s talk, but I think I saw a version of the same material at the Başarfest, and it used game theory methods to study resource allocation for networks (like power allocation). I needed the extra rest to get healthy, though.

Sergio Verdú gave the Shannon Lecture, and titled his talk “teaching it.” He made a few new proposals for how information theory should be taught and presented. One of the strengths he identified was the number of good textbooks that have come out about Information Theory, many of which were written by previous Shannon lecturers. If I had to sum up the main changes he proposed, they were to de-emphasize the asymptotic equipartition property (AEP), separate fixed-blocklength analysis from asymptotics, present more than just memoryless sources and channels, and provide more operational characterizations of information quantities. He drew on a number of examples, including simplified proofs, ways of getting bit-error converses without Fano’s Inequality, the importance of the KL-divergence, the theory of output statistics, and the usefulness of the normal approximation in information theory. I agreed with a lot of his statements from a “beauty of the subject” point of view, but I don’t know that these changes would necessarily make information theory more accessible to others.

Emery Brown gave the only non-information-theory talk: he addressed how to design signal processing algorithms to decipher brain function. Starting from point processes and their discretization to get spike functions and rate functions, he gave specific examples of trying to figure out how to track “place memory” neurons in the rat hippocampus. The hippocampus contains neurons that remember places (and also orientation). After walking through some examples of mazes in which a neuron would fire only when the rat was going one direction down a particular segment of the maze, he showed an experiment of a rat running around a circular area and tried to use the measurements on a set of neurons to infer the position of the rat. Standard “revcor” analysis just does a least squares regression of spikes onto position to get a model, but he used a state-space model to address the dynamics and some Bayesian inference methods to get significantly better performance. He then turned to how we should model the receptive field of a neuron evolving over time as a rat learns a task. When asked about what information theorists can bring to the table, he said that getting into a wet lab and working directly with experimentalists and bringing a more principled use of information measures in neuroscience data analysis would be great contributions. It was a really interesting talk, and I wish the crowd had been larger for it.
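For a rough feel of what a state-space approach to decoding position could look like, here is a toy sketch. It is not Brown's actual method. It assumes a circular track cut into discrete bins, a random-walk model for how the rat moves, and neurons whose spike counts are Poisson with a bump-shaped place field. All names and parameter values (`place_field`, `bayes_filter_step`, `stay_prob`, the baseline rate) are hypothetical.

```python
import math


def place_field(center, width, peak_rate, n_bins, baseline=0.01):
    """Expected spike count per time step in each bin of a circular track."""
    rates = []
    for b in range(n_bins):
        gap = abs(b - center)
        dist = min(gap, n_bins - gap)  # distance around the circle
        bump = peak_rate * math.exp(-0.5 * (dist / width) ** 2)
        rates.append(bump + baseline)  # baseline keeps log(rate) finite
    return rates


def bayes_filter_step(prior, fields, counts, stay_prob=0.6):
    """One predict/update step of a grid-based Bayesian filter.

    prior:  probability of the rat being in each bin before this step
    fields: one rate list per neuron, as returned by place_field
    counts: spike count observed from each neuron during this step
    """
    n = len(prior)
    move = (1.0 - stay_prob) / 2.0
    # Predict: assumed random walk (stay, or move one bin either way).
    predicted = [
        stay_prob * prior[b]
        + move * prior[(b - 1) % n]
        + move * prior[(b + 1) % n]
        for b in range(n)
    ]
    # Update: add the Poisson log-likelihood of the observed counts.
    log_post = []
    for b in range(n):
        total = math.log(max(predicted[b], 1e-300))
        for rates, k in zip(fields, counts):
            # The log(k!) term does not depend on b, so it is dropped.
            total += k * math.log(rates[b]) - rates[b]
        log_post.append(total)
    # Normalize in a numerically stable way.
    top = max(log_post)
    weights = [math.exp(v - top) for v in log_post]
    norm = sum(weights)
    return [w / norm for w in weights]
```

Calling `bayes_filter_step` once per time bin, feeding each posterior back in as the next prior, gives a running estimate of position. The movement model is what a one-shot regression lacks: it lets earlier spikes inform the current estimate.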