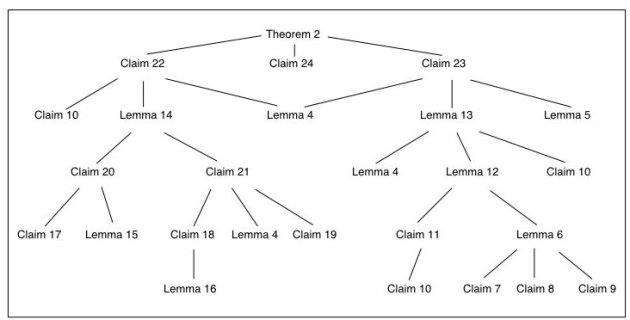

This last semester we had a reading group on percolation theory, using the new book by Bollobas and Riordan. The crowning moment of our discussions was a 3-week trek through the proof of Smirnov’s theorem, which shows the conformal invariance of crossing probabilities for the triangular lattice. The book apparently contains the first “complete” proof in print. It’s quite involved, and for my part of the presentation I made the following flowchart of the structure of the proof:

Daily Archives: July 10, 2007

ISIT 2007 : large random networks

Scaling Bounds for Function Computation over Large Networks (Sundar Subramanian, Piyush Gupta, and Sanjay Shakkottai) : This paper essentially looked at the scaling laws for the “refresh rate” of networks in which a collector node wants to compute a function f(x1, x2, …, xn) of measurements at the nodes of a network. The network has bounded degree and there is a difference in scaling between type-sensitive (mean, mode) and type-threshold (max, min) functions. They show that deterministic bounds on type-sensitive functions are not possible in general, but probabilistic bounds are possible. By using a joint source-channel coding strategy, for AWGN networks they obtain constant refresh rates for small path-loss exponents.
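To make the type-sensitive versus type-threshold distinction a bit more concrete, here is a minimal Python sketch. It relies on the usual definitions from the function-computation literature, not on anything specific to this talk: the "type" is the histogram of the measurements, and a type-threshold function depends only on that histogram clipped at some threshold. The function names and the toy alphabet are my own, purely for illustration.

```python
from collections import Counter

def histogram(measurements, alphabet):
    """The 'type' of the measurement vector: how often each symbol occurs."""
    counts = Counter(measurements)
    return [counts[a] for a in alphabet]

def clipped(hist, threshold=1):
    """Clip every count at a threshold; type-threshold functions only need this."""
    return [min(c, threshold) for c in hist]

def max_from_clipped(clipped_hist, alphabet):
    """max only needs to know which symbols occur at least once."""
    return max(a for a, c in zip(alphabet, clipped_hist) if c > 0)

def mean_from_histogram(hist, alphabet):
    """mean needs the full counts, so it is sensitive to the whole type."""
    return sum(a * c for a, c in zip(alphabet, hist)) / sum(hist)

alphabet = [0, 1, 2, 3]          # hypothetical measurement alphabet
x = [0, 0, 0, 3]                 # two measurement vectors with the same
y = [0, 3, 3, 3]                 # clipped histogram but different types

hx, hy = histogram(x, alphabet), histogram(y, alphabet)
print(clipped(hx) == clipped(hy))                      # same clipped type
print(max_from_clipped(clipped(hx), alphabet),
      max_from_clipped(clipped(hy), alphabet))         # same max
print(mean_from_histogram(hx, alphabet),
      mean_from_histogram(hy, alphabet))               # different means
```

The two vectors are indistinguishable after clipping, which is enough for the max but not for the mean. Intuitively, that is why the collector has to gather less information for max and min than for mean and mode.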

Characterization of the Critical Density for Percolation in Random Geometric Graphs (Zhenning Kong and Edmund M. Yeh) : Since we had a reading group on percolation theory this semester, this talk felt right up my alley. Although Monte Carlo simulations tell us the critical threshold (density) at which percolation (formation of a giant connected component) happens in random geometric graphs, the analytical bounds are quite loose. This paper gets tighter analytical bounds by doing some smarter bounding of the “cluster coefficients,” which come from looking at the geometry of the percolation model.
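For a feel of what the Monte Carlo side looks like, here is a rough Python sketch (standard library only) that drops points uniformly in a square, connects pairs within a fixed radius, and reports the fraction of points in the largest connected component. This is not the paper's method, which is analytical. The function name, the box size, and the use of a fixed point count in place of a true Poisson process are simplifying assumptions of mine.

```python
import random

def largest_component_fraction(density, side, radius=1.0, seed=0):
    """Fraction of points in the largest cluster of a random geometric graph."""
    rng = random.Random(seed)
    n = int(density * side * side)   # fixed count; a Poisson count would be more faithful
    if n == 0:
        return 0.0
    pts = [(rng.uniform(0, side), rng.uniform(0, side)) for _ in range(n)]

    # Bucket points into radius-sized cells so only nearby pairs are compared.
    cells = {}
    for i, (x, y) in enumerate(pts):
        cells.setdefault((int(x // radius), int(y // radius)), []).append(i)

    # Union-find with path halving and union by size.
    parent = list(range(n))
    size = [1] * n

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    def union(i, j):
        ri, rj = find(i), find(j)
        if ri == rj:
            return
        if size[ri] < size[rj]:
            ri, rj = rj, ri
        parent[rj] = ri
        size[ri] += size[rj]

    r2 = radius * radius
    for (cx, cy), members in cells.items():
        for dx in (-1, 0, 1):
            for dy in (-1, 0, 1):
                for j in cells.get((cx + dx, cy + dy), ()):
                    for i in members:
                        if i < j:
                            (xi, yi), (xj, yj) = pts[i], pts[j]
                            if (xi - xj) ** 2 + (yi - yj) ** 2 <= r2:
                                union(i, j)

    return max(size[find(i)] for i in range(n)) / n

for lam in (0.5, 1.0, 1.5, 2.0, 3.0):
    print(lam, round(largest_component_fraction(lam, side=30.0), 3))
```

Sweeping the density should show the largest-cluster fraction jumping from near zero to near one. In a finite box the transition is smeared out, so this only locates the threshold roughly.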

ISIT 2007 : feedback

Communication with Feedback via Posterior Matching (Ofer Shayevitz and Meir Feder) : This work was an attempt to come up with a common framework and generalization of the Horstein and Schalkwijk-Kailath feedback coding schemes, in which the encoder uses the feedback to track the decoder and “steer” it to the correct message. They come up with an idea called “posterior matching” and apply it to DMCs to show that a simple “steering” encoder can also achieve the empirical mutual information of the channel I(Q,W) using the posterior CDF at the decoder. It’s a pretty cool result, in the line of “A is like B in this way.”
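To illustrate the “steering” idea, here is a small Python sketch of a discretized Horstein-style scheme over a binary symmetric channel with perfect feedback. It is not the posterior matching scheme from the talk, only the classical special case it is said to generalize, and the discretization into bins, the function name, and all parameter values are my own assumptions. The encoder knows the decoder's posterior (thanks to the feedback), splits the bins at the boundary closest to the posterior median, and sends which side the message is on.

```python
import random

def horstein_bsc(message_bin, num_bins=64, flip_prob=0.1, steps=100, seed=0):
    """Simulate a discretized Horstein-style feedback scheme on a BSC."""
    rng = random.Random(seed)
    posterior = [1.0 / num_bins] * num_bins   # decoder's belief, known to encoder

    for _ in range(steps):
        # Pick the boundary k (bins < k vs. bins >= k) whose left mass is closest to 1/2.
        best_k, best_gap, left = 1, float("inf"), 0.0
        for k in range(1, num_bins):
            left += posterior[k - 1]
            gap = abs(left - 0.5)
            if gap < best_gap:
                best_k, best_gap = k, gap

        # Encoder sends the side of the boundary the message lies on.
        sent = 1 if message_bin >= best_k else 0
        received = sent ^ (rng.random() < flip_prob)   # BSC flips with prob. flip_prob

        # Decoder's Bayes update: bins consistent with the received bit gain weight.
        for i in range(num_bins):
            bit_i = 1 if i >= best_k else 0
            posterior[i] *= (1 - flip_prob) if bit_i == received else flip_prob
        total = sum(posterior)
        posterior = [p / total for p in posterior]

    decoded = max(range(num_bins), key=posterior.__getitem__)
    return decoded, posterior

decoded, posterior = horstein_bsc(message_bin=37)
print(decoded, round(posterior[decoded], 4))
```

Since each transmitted bit is roughly equally likely to be 0 or 1 under the decoder's current belief, every channel use is about as informative as it can be, and the posterior piles up on the true message.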

Broadcasting with Feedback (Sibi Raj Bhaskaran) : This addresses the question of broadcasting when feedback is only available to one user. In a degraded Gaussian broadcast setting, if the feedback is from the strong user, you can use a Gaussian codebook for the weak user with a Schalkwijk-Kailath scheme for the strong user to get an increased capacity region. This is one of those papers that I’ll have to read a bit more carefully…

The Gaussian Channel with Noisy Feedback (Young-Han Kim, Amos Lapidoth, and Tsachy Weissman) : This talk was about error exponents for the AWGN channel with noisy feedback. By using some change-of-measure and genie arguments, they show a non-trivial upper bound, and a three-phase coding scheme can give a lower bound which scales like 1/(feedback noise variance). Unlike the perfect feedback case, where the exponent is infinite, both bounds are finite. Furthermore, they show that linear coding for noisy feedback cannot achieve any positive rate.

Error Exponent for Gaussian Channels with Partial Sequential Feedback (Manish Agarwal, Dongning Guo, and Michael L. Honig) : In this talk, they take an AWGN channel in which only a fraction of the received symbols are fed back and ask for the error exponent. They propose an achievable scheme that uses the slots with feedback separately from the slots without. With feedback, they try to steer the decoder into a favorable position, and then use a forward error-correcting code to get the rest of the rate.

A Coding Theorem for a Class of Stationary Channels with Feedback (Young-Han Kim) : The class of channels under consideration is those with order-m memory, and in this case the capacity is given by a normalized directed mutual information. The main interest for me was in the proof strategy, which used Shannon strategies and something called the “Nedoma decomposition,” which I have to read about a bit more… except that it’s in German, so I have to brush up on my German first.
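For reference, this is the standard (Massey) definition of directed information and its normalized version, written in my own notation, not necessarily the paper's. Here X^i denotes the inputs up to time i and Y^{i-1} the outputs up to time i-1.

```latex
I(X^n \to Y^n) \;=\; \sum_{i=1}^{n} I\!\left(X^i ; Y_i \mid Y^{i-1}\right),
\qquad
\text{normalized: } \frac{1}{n}\, I(X^n \to Y^n).
```

Unlike the ordinary mutual information I(X^n; Y^n), each term conditions only on past outputs and uses only inputs up to the current time, which is what makes it the natural quantity when the encoder sees feedback.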

Capacity of Markov Channels with Partial State Feedback (Serdar Yüksel and Sekhar Tatikonda) : This was about partial feedback in that the state is quantized and sent back to the encoder. For a Markov state transition with some technical mixing conditions, they find a single-letter expression for the capacity with feedback, and show that a sliding-window encoder is “almost good enough,” which is good news for practical coding schemes. They also use some nice dynamic programming with the directed mutual information as the running cost, which merits some closer inspection, I think.