I am reading this nice paper by Harsha et al. on The Communication Complexity of Correlation, and some of their results rely on the following cute lemma (slightly reworded).

Let $P$ and $Q$ be two distributions on a finite set $\mathcal{X}$ such that the KL-divergence $D(P \| Q) < \infty$. Then there exists a sampling procedure which, when given a sequence of samples $x_1, x_2, \ldots$ drawn i.i.d. according to $Q$, will output (with probability 1) an index $i^{\ast}$ such that the sample $x_{i^{\ast}}$ is distributed according to the distribution $P$ and the expected encoding length of the index $i^{\ast}$ is at most

$$D(P \| Q) + 2 \log( D(P \| Q) + 1) + O(1),$$

where the expectation is taken over the sample sequence and the internal randomness of the procedure.

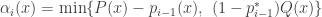

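So what is the procedure? It’s a rejection sampler that does the following. First set $p_0(x) = 0$ for all $x \in \mathcal{X}$ and set $p_0^{\ast} = 0$ as well. Then for each $i = 1, 2, \ldots$ do the following:

- Set $\alpha_i(x) = \min\{ P(x) - p_{i-1}(x),\ (1 - p_{i-1}^{\ast}) Q(x) \}$ for all $x \in \mathcal{X}$.
- Set $p_i(x) = p_{i-1}(x) + \alpha_i(x)$.
- Set $p_i^{\ast} = \sum_{x} p_i(x)$.
- Set $\beta_i = \alpha_i(x_i) / \left( (1 - p_{i-1}^{\ast}) Q(x_i) \right)$.
- Output $i$ with probability $\beta_i$.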
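Here is a minimal Python sketch of the steps above, mostly to convince myself that the bookkeeping works. The function names (`greedy_rejection_sample`, `iid_stream`), the list-based encoding of $P$ and $Q$ over the symbols `0..n-1`, and the `tol` guard against floating-point round-off are my own assumptions and are not taken from the paper.

```python
import random


def greedy_rejection_sample(P, Q, samples, rng, tol=1e-12):
    """Return (i, x_i) for the first index the greedy procedure accepts.

    P, Q: lists of probabilities over symbols 0..n-1 (Q[x] > 0 wherever P[x] > 0).
    samples: iterable of symbols drawn i.i.d. from Q.
    """
    n = len(P)
    p = [0.0] * n   # p_{i-1}(x): mass already assigned to halting with x
    p_star = 0.0    # p*_{i-1}: total probability of having halted so far
    for i, x_i in enumerate(samples, start=1):
        survive = 1.0 - p_star  # probability of reaching step i
        if survive <= tol:
            # Numerical guard (my addition): in exact arithmetic this branch
            # is reached with probability at most `survive`.
            return i, x_i
        # alpha_i(x) = min{ P(x) - p_{i-1}(x), (1 - p*_{i-1}) Q(x) }
        alpha = [max(0.0, min(P[x] - p[x], survive * Q[x])) for x in range(n)]
        beta = alpha[x_i] / (survive * Q[x_i])
        if rng.random() < beta:
            return i, x_i
        p = [p[x] + alpha[x] for x in range(n)]
        p_star = sum(p)
    raise ValueError("sample stream ended before the procedure halted")


def iid_stream(Q, rng):
    """Endless stream of symbols drawn i.i.d. according to Q."""
    symbols = list(range(len(Q)))
    while True:
        yield rng.choices(symbols, weights=Q)[0]


if __name__ == "__main__":
    rng = random.Random(0)
    P = [0.5, 0.3, 0.2]
    Q = [0.2, 0.3, 0.5]
    trials = 20000
    counts = [0] * len(P)
    for _ in range(trials):
        _, x = greedy_rejection_sample(P, Q, iid_stream(Q, rng), rng)
        counts[x] += 1
    # The empirical frequencies should be close to P, not Q.
    print([c / trials for c in counts])
```

The sampler recomputes the whole vector $\alpha_i(\cdot)$ at every step even though only $\alpha_i(x_i)$ is used for the accept/reject coin; the rest is needed to update $p_i(x)$ and $p_i^{\ast}$ for the next round.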

Ok, so what is going on here? It turns out that $\alpha_i(x)$ is tracking the probability that the procedure halts at $i$ with $x_i = x$ (this is not entirely clear at first). Thus $p_i(x)$ is the probability that we halted at or before time $i$ and the sample is $x$, and $p_i^{\ast}$ is the probability that we halt at or before time $i$. Thus $(1 - p_{i-1}^{\ast})$ is the probability that the procedure gets to step $i$. Getting back to $\alpha_i(x)$, we see that $x_i = x$ with probability $Q(x)$ and we halt with probability $\beta_i$, so indeed, $(1 - p_{i-1}^{\ast}) \cdot Q(x) \cdot \beta_i = \alpha_i(x)$ is the probability that we halt at time $i$ with $x_i = x$.

What we want then is that $P(x) = \sum_{i} \alpha_i(x)$. So how should we define $\alpha_i(x)$? It’s the minimum of two terms: in order to stop at time $i$ such that $x_i = x$, the factor $\alpha_i(x)$ is at most $(1 - p_{i-1}^{\ast}) Q(x)$. However, we don’t want to let $p_i(x)$ exceed the target probability $P(x)$, so $\alpha_i(x)$ must be at most $P(x) - p_{i-1}(x)$. The authors say that in this sense the procedure is greedy, since it tries to get $p_i(x)$ as close to $P(x)$ as it can.

In order to show that the sampling is correct, i.e. that the output distribution is $P$, we want to show that $p_i(x) \to P(x)$ as $i \to \infty$. This follows by induction. To get a bound on the description length of the output index, they have to show that

$$\mathbb{E}[ \log i^{\ast} ] \le D(P \| Q) + O(1).$$

This is an interesting little fact on its own. It comes out because $\alpha_i(x) > 0$ implies

$$i \le \frac{1}{1 - p_{i-1}^{\ast}} \cdot \frac{P(x)}{Q(x)} + 1,$$

and then expanding out $\mathbb{E}[ \log i^{\ast} ] = \sum_{x} \sum_{i} \alpha_i(x) \cdot \log i$.
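Since the $\alpha_i(x)$ are deterministic, both the correctness claim and the expansion of $\mathbb{E}[ \log i^{\ast} ]$ can be checked numerically without drawing any samples. The following is a rough sketch under my own assumptions: the helper names `alpha_table` and `kl_bits`, the example pair $P$, $Q$, the truncation at 200 steps, and the use of base-2 logarithms are all illustrative choices, not something from the paper.

```python
import math


def alpha_table(P, Q, steps):
    """Rows alpha_1, ..., alpha_steps of the greedy procedure (no sampling needed)."""
    n = len(P)
    p = [0.0] * n
    p_star = 0.0
    table = []
    for _ in range(steps):
        survive = 1.0 - p_star
        # max(0, .) only absorbs floating-point round-off.
        alpha = [max(0.0, min(P[x] - p[x], survive * Q[x])) for x in range(n)]
        table.append(alpha)
        p = [p[x] + alpha[x] for x in range(n)]
        p_star = sum(p)
    return table


def kl_bits(P, Q):
    """D(P || Q) in bits, with the convention 0 log 0 = 0."""
    return sum(px * math.log2(px / qx) for px, qx in zip(P, Q) if px > 0)


P = [0.5, 0.3, 0.2]
Q = [0.2, 0.3, 0.5]
table = alpha_table(P, Q, steps=200)

# sum_i alpha_i(x) should approach P(x) for every x.
halting_mass = [sum(row[x] for row in table) for x in range(len(P))]

# E[log i*] = sum_x sum_i alpha_i(x) log i, truncated at 200 steps.
expected_log_index = sum(
    a * math.log2(i) for i, row in enumerate(table, start=1) for a in row
)

print("sum_i alpha_i(x):", halting_mass)
print("E[log2 i*]      :", expected_log_index)
print("D(P || Q) (bits):", kl_bits(P, Q))
```

One pair of distributions is not a proof, of course, and the printed numbers say nothing about how large the $O(1)$ term can get in general; they are only a sanity check on the definitions.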

One example application of this lemma in the paper is the following problem of simulation. Suppose Alice and Bob share an unlimited amount of common randomness. Alice has some $x$ drawn according to $P(x)$ and wants Bob to generate a $y$ according to the distribution $P(y | x)$. So ideally, $(x, y)$ have joint distribution $P(x) P(y | x)$. They can both generate a sequence of common $y_1, y_2, \ldots$ according to the marginal distribution of $y$. Then Alice tries to generate the distribution $P(y | x)$ according to this sampling scheme and sends the index $i^{\ast}$ to Bob, who chooses the corresponding $y_{i^{\ast}}$. How many bits does Alice have to send on average? It’s just

$$\mathbb{E}_{P(x)}[ D( P(y|x) \| P(y) ) + 2 \log( D( P(y|x) \| P(y) ) + 1) + O(1) ]$$

which, after some love from Jensen’s inequality, turns out to be upper bounded by

$$I(X ; Y) + 2 \log( I(X; Y) + 1) + O(1).$$
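For completeness, here is how I would spell out the Jensen step. The shorthand $D_x$ is my own notation for the per-$x$ divergence and does not appear in the paper.

```latex
% Shorthand (my notation): D_x = D( P(y|x) \| P(y) ).
\begin{align*}
\mathbb{E}_{P(x)}[ D_x ]
  &= \sum_{x} P(x) \sum_{y} P(y|x) \log \frac{P(y|x)}{P(y)}
   = I(X ; Y), \\
\mathbb{E}_{P(x)}[ 2 \log( D_x + 1 ) ]
  &\le 2 \log\left( \mathbb{E}_{P(x)}[ D_x ] + 1 \right)
   = 2 \log( I(X ; Y) + 1 ).
\end{align*}
```

The first line is just the definition of mutual information as an average divergence, and the second uses the concavity of $\log$. Adding the two lines and the $O(1)$ term gives the bound.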

Pretty cute, eh?
